Top 110 Data Science Interview Questions (Updated for 2025)

Overview

Most data science interviews are tough for one reason: there’s no consistency on what types of questions get asked. Therefore, you need to prepare for the interview and practice a wide variety of data science interview questions.

The questions you’ll get asked are highly dependent on the position and company you are interviewing with. Some companies view data scientists as high-powered data analysts, in which case you should expect more SQL questions, while others look for data scientists with strong ETL and data engineering skills.

What comes up most frequently? Interview Query analyzed the most commonly asked questions in data scientist interviews by subject:

Overall, SQL, machine learning, data structures and algorithms, and statistics and A/B testing questions dominated the data science interview.

SQL is the most important topic. It’s asked in more than 90% of data science interviews. Machine learning (85%+) and Python (80%+) are also frequently asked topics.

How to Use Our Data Science Interview Question Guide

To help you study, we’re going to run through the Top 110 data science interview questions. All of these are real questions asked in data science interviews at companies like Facebook, Google, Amazon, and more.

We highlighted the most important interview questions in nine categories. Of all nine, SQL is of the highest significance, as SQL questions are asked in more than 90% of interviews, followed by machine learning (85%+) and Python (80%+). Explore questions by category:

Data Science Behavioral Interview Questions

In data science interviews, behavioral interview questions are meant to assess technical competency, culture fit, past experience, and communication abilities.

1. Give an example of an analysis that you did that drove business impact.

Provide a concrete example and use statistics to back up your claim. You might talk about an increase in user engagement or improved marketing performance.

Be sure to structure your answer. The STAR format (Situation, Task, Actions, Results) is a go-to for many data science interviews. First, you outline the situation: “In my last job, I was working as a marketing analyst.” Then, highlight the task: “Marketing performance had stagnated, and we wanted to increase our marketing ROI.”

Finally, state the actions you took and the results: “I decided to do an in-depth analysis of our audience targeting and found more profitable demographics to target. My analysis resulted in a 10 percent increase in marketing ROAS.”

2. How do you make technical topics accessible to non-technical audiences?

Behavioral questions in data science interviews are commonly used to assess your communication style. This question will help the interviewer understand how well you communicate complex topics. One way to answer this is to talk about data visualization and how you have created visualizations that made your insights more understandable.

3. Tell me about a data science project you have worked on. What challenges did you experience? How did you respond?

Be prepared to talk about any past data science projects that you have worked on. These could be professional projects or ones you have done in your free time. Practice talking about the project using the STAR format (or something similar), and always incorporate the challenges of the project.

Was there a challenge in gathering and cleaning the data? Did you have trouble generating insights? Was communicating the insights difficult?

4. Provide an example of a goal you have achieved. How did you reach it?

A behavioral question like this is designed to understand your learning style, organization, and planning skills. First, define the goal. If you’re interviewing for your first data science job, you might choose a goal like learning to code in Python. Or, you might choose a professional goal like completing a project on a tight deadline.

After you’ve provided an overview of the goal, you can use a format like STAR to outline your exact thought and action process.

5. Tell me about a time you missed a deadline. How did you respond?

Inevitably, questions like this will arise in your interview. These are specifically designed to see how you respond to adversity. Be honest in your response, but also be aware of the lessons you learned and how you applied (or would apply) these lessons in future scenarios.

Let’s say the missed deadline was a result of having unclear expectations at the start of the project. You might say, “I learned how important it is to get stakeholder input and nail down requirements before starting a project.” Then, talk about a time you were able to apply those insights or how you would apply them to a future project.

6. How have you used data to elevate the experience of a customer?

Customer-related behavioral questions are common in Amazon data science interviews. This question assesses whether you can see problems through the customer’s eyes and develop data-driven methods for making customers’ lives easier. You might say:

“In my previous job, I helped to build a predictive model that accurately identified potential customer service problems in real-time. Using the model, our customer success team would be alerted if a customer was experiencing a difficulty, e.g. unhappy with our chatbot process, having difficulty finding relevant information, difficulties in using our system. Using the alerts, the team could then reach out to customers in the moment and were able to resolve issues more quickly. As a result, the team reduced churn and improved customer satisfaction scores.”

7. Provide an example of a goal you did not meet. How did you handle it?

Here’s a sample answer from our Data Science Behavioral Questions guide:

“In my last position at an e-commerce company, I was given a goal of developing a system to predict customers who were most likely to churn, which the company could then use to send personalized promotions to head off those customers. The goal was to increase customer retention by 20% and revenue by 10% over one year. Unfortunately, I missed that goal, with retention only increasing by 10% during the desired timeframe. I wanted to understand what went wrong, so I focused on improving and optimizing the prediction algorithm while also working with the marketing team to better personalize the offers.” If true, let the interviewer know if your response helped you accomplish additional goals set for you in the future.

8. How do you handle tight deadlines?

Start with something like, “Yes, I’m experienced working on deadlines, and maybe the best way to show that is with an example.” Then, walk the interviewer through an example like this:

- Start by outlining the project you worked on and the critical deadline you had to meet. “We needed to install a new hardware component for a critical launch in two weeks.”

- Help the interviewer understand the urgency of the deadline. “This component was critical for launch; without it, we wouldn’t be able to introduce a new feature that the marketing team had been teasing for months.”

- Tell the interviewer your plan and methods used. “I developed a day-to-day schedule for implementing the hardware component, outlined with tasks that needed to be completed each day. I communicated the plan to the engineering team to gain buy-in.”

- Finally, show the result. “The schedule, which included a daily task list, made it easy to measure progress and work ahead. Ultimately, we were able to introduce the component 2 days early, which allowed plenty of time for additional testing.”

9. What did you enjoy most about your last job?

A few tips for this question: 1) keep it professional (don’t say, “I really liked the company software picnics”), 2) choose an example that aligns with the company you are interviewing with, 3) provide details.

Here’s a sample answer from LiveCareer:

“In my last job I was a member of a five-person team, and we worked together on a variety of large-scale projects where each of us was responsible for one part of the project. I really enjoyed the teamwork aspect of the job, and liked seeing how all the pieces of our projects came together in the end. The teamwork aspect of that job — and this job — is something that greatly appeals to me, because I typically end up learning a lot about what I’m capable of when working in synch with others.”

10. Tell me about a time you had to clean and organize a big dataset.

A good clarifying question would be: “What do you consider a large dataset?” This won’t necessarily change your answer, but it will show that you’re detail-oriented. Note: If you haven’t worked with a “large” dataset, choose a project with a smaller dataset that required a lot of data cleaning and describe how you might scale what you learned to a larger dataset.

A framework you could use for this question:

- Describe the problem. Was this a classification, clustering, regression, etc. problem?

- Describe the dataset. What challenges were specific to the dataset, e.g. duplicates, missing data, or data labeling issues?

- Describe the cleaning techniques you used. Talk about the methods you used and why you used them, e.g. imputation by KNN, deduplication, etc.

- Describe the challenges. Talk about the challenges you faced, and the methods you used to overcome them.

11. How do you learn new data science concepts? Tell us about a time when you had to learn a new skill.

Start with a framework:

- I moved to a new department in my last job. Previously, I had been focused on operations analytics, and in the new department, marketing analytics was the focus.

- My experience with marketing analytics was limited. So I created a one-week plan to get myself up to date on the project.

- During that week: I read all of the existing documentation, I created a list of questions for the department head, had a chat with the data scientist I was replacing, and I took a self-paced 5-hour marketing analytics course.

- After the week, I was able to jump into the day-to-day tasks, and scheduled a regular weekly meeting with my supervisor to answer questions and plot out what was coming next, so that I could prepare and learn in advance.

12. What was your greatest accomplishment in your last job?

Your answer to this question should focus on results. An example response could be:

“When I joined my last job, the company had severe conversion rate issues. The marketing team was bringing in qualified leads, but they weren’t converting customers at a rate that would be profitable. They hired me as an analyst to determine what was going wrong and advise them on steps that they could take to correct the issue. I created a 3-month plan of attack. Phase 1 was analysis. Phase 2 was recommendations and prioritization. And Phase 3 was the roll-out and maintenance. Through my analysis, I uncovered issues in customer experience, customer segmentation, and messaging. After rolling out my recommendations, we were able to increase conversion rates by 200%.”

13. How comfortable are you presenting your insights?

Interviewers want to know you’re confident in your communication skills and can effectively communicate complex ideas. With a question like this, walk the interviewer through your process:

- How you prepare data presentations

- Strategies to make data accessible

- What tools you use in presentations

Product Metrics and Analytics

In data science interviews, product metrics and analytics questions assess your product intuition and ability to make data-driven product decisions. Data science metrics questions ask you to investigate analytics, measure success, track feature changes, or assess growth. Sometimes, they take the form of data analytics case studies.

14. Friend requests are down 10% on Facebook. What would you investigate?

See a video mock interview for this product sense question:

See our guide to Facebook data science interview questions.

15. Average comments per user has dropped over a three-month period, despite consistent growth after a new launch. What metrics would you investigate?

This decreasing comments product question assesses your product intuition. Here’s a sample solution:

You’re provided information that the user count is increasing linearly. Therefore, the decrease isn’t due to a declining user base. You might want to look at churn and active user engagement to solve this problem.

Some active users may churn off the platform, resulting in fewer comments overall. In this case, a more informative metric is comments per active user.

16. You work for a SaaS company that provides leads to insurance agents and are asked to determine whether providing more leads to customers results in greater retention.

Let’s assume that, for this question, the VP of Sales provides you a graph that shows agents in Month 3 receiving more than three times as many leads as agents in Month 1 as proof. What might be flawed about the VP’s thinking?

Hint: The key is to not confuse the output metrics with the input metrics. In this case, while the question makes us think that more leads is the output metric, what should really be investigated is churn. If we break out customers into cohorts based on the number of leads per month, we can see whether churn goes down by cohort each month.

17. How would you verify the claim that Facebook is losing younger users? What metrics would you look at?

With product sense questions in data science interviews, always start with clarifying questions. With this Facebook product data science question, you could ask where the claim came from, and a definition of “younger” user. “Churn” and “active users” are two metrics that would help you verify the claim. In particular, you should be interested in daily, weekly, and monthly rates for both metrics.

By looking at daily, weekly, and monthly churn, we’d have a better grasp on whether younger users are less engaged, e.g. higher weekly churn vs. lower monthly churn, or if Facebook is actually losing younger users.

18. A PM tells you that the weekly active user (WAU) metric is up 5% but email notification open rates are down 2%. What would you investigate to diagnose this problem?

When initially reading this question, we should first assume that it is a debugging question, and then possibly dive into trade-offs.

WAU (weekly active users) and email open rates are usually directly correlated, especially if emails are used as a call to action to bring users back onto the website. An email that is opened and then clicked leads to an active user, but that doesn’t necessarily mean the two metrics always move together or that email is the only factor driving changes in WAU. First, you might want to look at debugging: problems in tracking, seasonality, open rates by country/demographic, etc.

If this doesn’t reveal a solution, you’d next want to consider trade-offs.

19. How would you measure the success of Uber Eats?

The first thing to answer with this question is: “What is success?” Ask clarifying questions like: Would success mean bringing new users onto the platform? Would it be converting users who have been using Uber for ridesharing? Is it new restaurant growth?

Additionally, you’d want to look at metrics like:

- Number of rideshare and Uber Eats users / number of rideshare-only users

- Number of unique orders per user per week / month

- Number of users making a second order / number of users making a first order

20. Pinterest introduced a new feature: video pins. How would you determine what percentage of pins should be videos?

Questions like this are common in Pinterest data science interviews. Specifically, this question says the goal is to increase user engagement and the total audience size, and that you are tasked with measuring the impact of video pins on the user experience.

Hint: Since the goal is related to user engagement and total audience size, you need to define a few metrics related to retention and sharing.

21. Reddit recently implemented a new search toolbar. What metrics would you measure to determine the impact of the search toolbar?

To pull the best metrics for product data science questions, start with the goal of the product. In this case, Reddit’s search bar would be designed to provide the most relevant and interesting content to users. How would you measure this? A few metrics you might start with include click-through-rate (CTR), bounce rate (percentage of users who perform search but don’t click a result), and time users spend in search.

22. Traffic went down 5%. How would you determine the reason for this?

Follow up with clarifying questions like:

- What’s the timeframe? Day-over-day? Week-over-week? Month-over-month?

- How does this compare to baseline traffic?

- What type of traffic? Is it channel specific?

Then, outline a process. You’d likely start by checking for bugs or errors in the data. Was it an analytics error? Then you could look at internal and external factors like competitor feature launches, traffic by geolocation, seasonality, and similar declines in other metrics (marketing engagement, conversion rate).

23. How would you measure the success of Facebook Groups?

Facebook Groups provide a space for users to connect with other users through a shared interest or real-life/offline relationship. Additionally, the overarching business goal is to increase user engagement.

Therefore, you could start with some of the most obvious metrics like monthly active users (is it increasing or decreasing), as well as general engagement metrics like number of posts, comments, and shares.

Some others to consider would be:

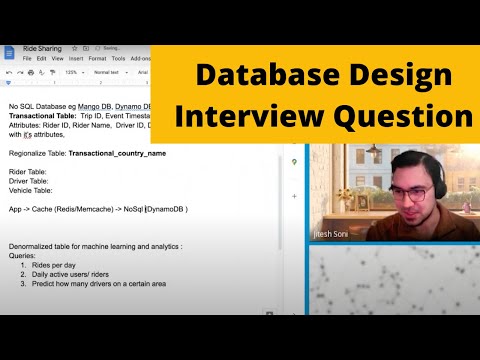

- % of users that join a group after viewing the group

- % of users that engage

- % of users that friend or follow another user of the group within one week of joining

24. You work at Twitter and are tasked with assigning negative values to abusive tweets. How would you do that?

You could build a sophisticated sentiment analysis model for a task like this. But let’s say the company doesn’t have the time to invest in that approach. Are there metrics you could use to help you measure the negativity of an abusive tweet?

25. How would you conduct a user journey analysis with the goal of improving UI for a community app forum?

Video Solution:

26. What factors could have biased this result, and what would you look into?

More context: Suppose there exists a new airline named Jetco that flies domestically across North America. Jetco recently had a study commissioned that tested the boarding time of every airline, and it came out that Jetco had the fastest average boarding times of any airline.

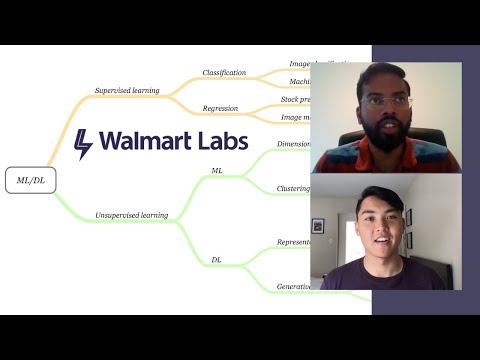

Machine Learning Interview Questions

In data science interviews, machine learning and modeling questions assess your experience using machine learning tools and depth of knowledge. Most commonly, these questions examine algorithm concepts, machine learning case studies, and recommendation engines.

27. What are the assumptions of linear regression?

With a question that asks the assumptions of linear regression, know that there are several assumptions, and that they’re baked into the dataset and how the model is built. The first assumption is that there is a linear relationship between the features and the response variable, otherwise known as the value you’re trying to predict. What else can we assume?

28. What is the difference between xgboost and random forest?

Random forest is a bagging algorithm. It trains several base learners (decision trees) independently, so they can be generated in parallel, and then combines their predictions.

XGBoost, by contrast, is a boosting algorithm: the trees are built sequentially, such that each subsequent tree aims to reduce the errors of the previous trees. Each new tree is fit to the residual errors left by its predecessors, so the tree that grows next in the sequence learns from an updated version of the residuals.

29. What metrics would you use to assess accuracy and validity of a spam classifier?

Spam filtering is a binary classification problem in which the emails can either be spam or not spam. Also, be cognizant of how unbalanced the dataset might be, e.g. lots of non-spam emails vs much fewer spam emails.

With that in mind, define the classification definitions for each output scenario:

- True positives: The email prediction is spam and the email is actually spam.

- True negatives: The email prediction is not spam and the email is actually not spam.

- False positives: The email prediction is spam and the email is actually not spam.

- False negatives: The email prediction is not spam and the email is actually spam.

Given these definitions, what would be the metrics for measuring model accuracy? See the full solution on Interview Query.
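
As one possible illustration (a minimal sketch, not the full solution referenced above), the four outcomes can be counted directly and turned into accuracy, precision, recall, and F1. The function name and the toy labels below are made up for the example; `1` is assumed to mean spam. On an unbalanced dataset, precision and recall are usually more informative than accuracy alone.

```python
def classification_metrics(y_true, y_pred):
    """Count confusion-matrix cells for binary labels (1 = spam) and derive metrics."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    # Guard against division by zero when a class is never predicted or never present.
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if (precision + recall) else 0.0
    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    return {
        "tp": tp, "tn": tn, "fp": fp, "fn": fn,
        "accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1,
    }


# Hypothetical, unbalanced toy data: 8 legitimate emails and 2 spam emails.
y_true = [0, 0, 0, 0, 0, 0, 0, 0, 1, 1]
y_pred = [0, 0, 0, 0, 0, 0, 0, 1, 1, 0]
metrics = classification_metrics(y_true, y_pred)
print(metrics)
```

Here accuracy looks high because most emails are not spam, while precision and recall show that only half of the spam predictions and half of the actual spam were handled correctly.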

30. How would you create a system for Facebook Marketplace to detect if someone is listing a firearm?

This intermediate machine learning question was asked by Facebook. See a video mock interview solution for this question:

31. You want to build a model to predict housing prices, but 20% of the listings are missing square footage. What do you do?

This classic data modeling question was asked by Redfin and Panasonic. A quick solution: you could build models with different volumes of training data. With this method, you can avoid using the 20% of listings that are missing values. Imputation would be another option for solving this question.

32. Is a decision tree model best for predicting if a borrower will pay back a personal loan? How would you evaluate performance of the model?

A few questions to consider are: How would you evaluate performance of the model? And how would you compare a decision tree to other models? See a full solution in this YouTube mock interview:

See our guide to the Square data scientist interview.

33. What are some differences between classification and regression models?

The key difference in regression and classification models is the nature of the data they want to predict, their output. In regression models, the output is numeric, whereas, in classification models, the output is categorical.

34. An ecommerce website’s pricing algorithm is underpricing products. What steps would you take to diagnose the problem?

See a full solution to this question on YouTube:

35. Explain K-fold cross-validation.

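According to Machine Learning Mastery, K-fold cross-validation is used to “estimate the skill of the model on new data.” The dataset is split into K folds; the model is trained on K-1 folds and evaluated on the remaining fold, and this is repeated so that each fold serves as the test set once. There are various tactics you can use for selecting the value of K, and there are common variations, such as stratified and repeated K-fold, both available in scikit-learn.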
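
To make the splitting step concrete, here is a minimal sketch in plain Python that only generates the train/test index sets for each fold. The function name `k_fold_indices` is hypothetical, and the folds are contiguous and unshuffled for readability; in practice you would typically shuffle first or rely on a library implementation such as scikit-learn's.

```python
def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) for each of k contiguous folds."""
    indices = list(range(n_samples))
    # Spread any remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = indices[start:start + size]
        train = indices[:start] + indices[start + size:]
        yield train, test
        start += size


folds = list(k_fold_indices(10, 3))
for train, test in folds:
    print("train:", train, "test:", test)
```

Each sample lands in exactly one test fold, so averaging a model's score over the K test folds uses every observation for evaluation once.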

36. How would you use machine learning to predict missing values for a dataset missing 30% of values?

With a question like this, start with some clarifying questions. How large is the dataset? What type of data is it? What type of problem are you trying to solve?

For example, with a large-scale dataset for a classification problem, you might use a Python package like LightGBM to impute values. Or you could use interpolation, which would use neighbor data to estimate values.

37. What is dimensionality reduction? What are the benefits of using it?

In simple terms, dimensionality reduction is the process of reducing the dimension of your feature set. For example, a dataset could have 50 features (columns), and with dimensionality reduction, we might reduce the number of features to 15.

Why would you do this? As the number of features increases, the model becomes more complex, and the risk of overfitting increases. This can result in poor performance. Some key benefits of dimensionality reduction include:

- Reduced time and storage requirements

- Improved parameter interpretation

- Noise reduction and more interpretable data

38. What is ensemble learning? How would you explain it to a nontechnical person?

Ensemble learning is an approach to machine learning that combines the predictions of multiple models to improve predictive performance. There are three main classes of ensemble learning:

- Bagging

- Stacking

- Boosting

One example would be a random forest, which is an ensemble of multiple decision trees.

39. What is variance in a model?

Variance is a measure of how much the model’s predictions would vary if it were trained on a different dataset drawn from the same population. It can also be thought of as the “flexibility” of the model.

SQL Interview Questions for Data Scientists

Data science SQL interview questions are very common and asked in about 90% of data science interviews. These questions assess your ability to write clean code, usually during a whiteboarding session. Common topics include pulling metrics, analytics case studies, ETL, and database design.

40. How would you test for NULL values in a database?

NULL is usually used in databases to signify a missing or unknown value. To compare the fields with NULL values, you can use the IS NULL or IS NOT NULL operator.
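
A small sketch of why the dedicated operators matter, using Python's built-in sqlite3 module with a made-up `users` table (the table and column names are assumptions for illustration). Comparing with `= NULL` never evaluates to true, so it silently returns no rows.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE users (id INTEGER, email TEXT)")
conn.executemany(
    "INSERT INTO users VALUES (?, ?)",
    [(1, "a@example.com"), (2, None), (3, None)],  # None is stored as NULL
)

# "= NULL" yields NULL (unknown) for every row, so nothing is returned.
equals_null = conn.execute("SELECT id FROM users WHERE email = NULL").fetchall()

# IS NULL / IS NOT NULL are the correct tests.
is_null = conn.execute("SELECT id FROM users WHERE email IS NULL ORDER BY id").fetchall()
is_not_null = conn.execute("SELECT id FROM users WHERE email IS NOT NULL").fetchall()

print(equals_null, is_null, is_not_null)
```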

41. Given three tables, representing customer transactions and customer attributes, write a query to get the average order value by gender.

For this problem, which has been asked in Uber data science interviews, note that we are going to assume that the question states average order value for all users that have ordered at least once.

Therefore, we can apply an INNER JOIN between users and transactions.

SELECT
    u.sex
    , ROUND(AVG(quantity * price), 2) AS aov
FROM users AS u
INNER JOIN transactions AS t
    ON u.id = t.user_id
INNER JOIN products AS p
    ON t.product_id = p.id
GROUP BY 1

42. Given a table with event logs, find the percentage of users that had at least one 7-day streak of visiting the same URL.

Here’s the key observation for this problem: Say that, for a given user and URL, you sort their visit dates in ascending order and assign a rank (row_num) to each date (it doesn’t matter whether you use RANK() or ROW_NUMBER() if the dates are unique). If you subtract each date’s rank, in days, from the date itself, you’ll find that days belonging to the same streak produce the same result.
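
The date-minus-rank observation can be sketched in plain Python before translating it to SQL. This is an illustration under assumptions: the event log is taken to be a list of hypothetical `(user_id, url, visit_date)` tuples, and the function names are made up.

```python
from datetime import date, timedelta


def has_streak(visit_dates, length=7):
    """Return True if the dates contain at least `length` consecutive calendar days."""
    unique_days = sorted(set(visit_dates))
    streak_sizes = {}
    for rank, day in enumerate(unique_days):
        # Consecutive days share the same (day - rank) anchor date.
        anchor = day - timedelta(days=rank)
        streak_sizes[anchor] = streak_sizes.get(anchor, 0) + 1
    return any(size >= length for size in streak_sizes.values())


def pct_users_with_streak(events, length=7):
    """events: iterable of (user_id, url, visit_date) tuples."""
    visits = {}
    for user_id, url, day in events:
        visits.setdefault((user_id, url), []).append(day)
    all_users = {user_id for user_id, _ in visits}
    streak_users = {
        user_id for (user_id, _), days in visits.items() if has_streak(days, length)
    }
    return 100.0 * len(streak_users) / len(all_users) if all_users else 0.0


start = date(2024, 1, 1)
events = (
    # User 1 visits the same URL seven days in a row.
    [(1, "/home", start + timedelta(days=i)) for i in range(7)]
    # User 2 visits six days in a row, skips a day, then returns.
    + [(2, "/home", start + timedelta(days=i)) for i in (0, 1, 2, 3, 4, 5, 7)]
)
pct = pct_users_with_streak(events)
print(pct)
```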

43. Write a query to identify customers who placed more than three transactions each in both 2019 and 2020.

This question gives us two tables and asks us to find the names of customers who placed more than three transactions in both 2019 and 2020.

Note that the phrasing of the question implies this logical expression: customer transactions > 3 in 2019 AND customer transactions > 3 in 2020.

Our first step is to join the transactions table to the users table so that we can easily reference both the user’s name and their orders together. We can join the tables on the id field of the users table and the user_id field of the transactions table:

FROM transactions t
JOIN users u
    ON u.id = t.user_id

44. Given the employees and departments table, write a query to get the top three highest employee salaries by department.

In this question, we need to order the salaries by department. A window function here is useful. Window functions enable calculations within a certain partition of rows. In this case, the RANK() function would be useful. What would you put in the PARTITION BY and ORDER BY clauses?

Your window function can look something like:

RANK() OVER (PARTITION BY id ORDER BY metric DESC) AS ranks

with id and metric replaced by the fields relevant to the question
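
One way this could look end to end, run through Python's built-in sqlite3 module. The schema, column names, and sample rows are assumptions made up for the sketch, and window functions require SQLite 3.25 or newer. Note that RANK() can return more than three rows per department when salaries tie; DENSE_RANK() or ROW_NUMBER() behave differently in that case.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE departments (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE employees (
    id INTEGER PRIMARY KEY, name TEXT, salary INTEGER, department_id INTEGER
);
INSERT INTO departments VALUES (1, 'Engineering'), (2, 'Sales');
INSERT INTO employees VALUES
    (1, 'Ana', 150, 1), (2, 'Ben', 140, 1), (3, 'Cy', 130, 1), (4, 'Di', 120, 1),
    (5, 'Eve', 90, 2), (6, 'Flo', 80, 2);
""")

query = """
SELECT department, employee, salary
FROM (
    SELECT d.name AS department,
           e.name AS employee,
           e.salary AS salary,
           -- Rank salaries within each department, highest first.
           RANK() OVER (PARTITION BY d.id ORDER BY e.salary DESC) AS salary_rank
    FROM employees AS e
    JOIN departments AS d ON d.id = e.department_id
) AS ranked
WHERE salary_rank <= 3
ORDER BY department, salary DESC
"""
top_salaries = conn.execute(query).fetchall()
print(top_salaries)
```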

45. Write a SQL query to create a metric to recommend pages for each user based on their friends’ liked pages.

Let’s say we want to build a naive recommender for this SQL problem. We’re given two tables: one table called friends with user_id and friend_id columns representing each user’s friends, and another table called page_likes with user_id and page_id columns representing the pages each user liked.

Let’s solve this problem by visualizing what kind of output we want from the query. Given that we have to create a metric for each user to recommend pages, we know we want something with a user_id and a page_id along with some sort of recommendation score.

Let’s try to think of an easy way to represent the scores of each user_id and page_id combo. One naive method would be to create a score by summing up the total likes by friends on each page that the user hasn’t currently liked. Then, the max value on our metric will be the most recommendable page.
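
The naive scoring idea can be prototyped in plain Python before writing the SQL. This sketch assumes the two tables are available as lists of tuples with the column order described above and that each friendship row appears once; the function name is hypothetical.

```python
from collections import Counter


def recommend_pages(friends, page_likes):
    """Score each (user_id, page_id) pair by how many of the user's friends like the page."""
    likes_by_user = {}
    for user_id, page_id in page_likes:
        likes_by_user.setdefault(user_id, set()).add(page_id)

    scores = Counter()
    for user_id, friend_id in friends:
        already_liked = likes_by_user.get(user_id, set())
        for page_id in likes_by_user.get(friend_id, set()):
            # Only recommend pages the user has not liked yet.
            if page_id not in already_liked:
                scores[(user_id, page_id)] += 1
    return scores


friends = [(1, 2), (1, 3), (2, 1)]  # (user_id, friend_id)
page_likes = [(1, 10), (2, 10), (2, 20), (3, 20), (3, 30)]  # (user_id, page_id)
scores = recommend_pages(friends, page_likes)
print(scores.most_common())
```

For user 1, page 20 scores highest because two friends like it, which matches the idea that the maximum value of the metric marks the most recommendable page.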

46. When do you use the CASE WHEN function?

CASE WHEN is used to perform a logical test and return a specified value when the test is true. A CASE expression evaluates its WHEN conditions in order and returns the THEN value of the first condition that is true.

If no condition is true, the ELSE value is returned; if no ELSE is specified, NULL is returned. Here is example syntax for CASE WHEN:

CASE [<expression>]
    WHEN <value1> THEN <return value1>
    WHEN <value2> THEN <return value2>
    ELSE <return value3>
END

47. Write a SQL query to list all customer orders.

In this Amazon data science interview question, you’re provided a transactions table and a users table. The first step is to join the transactions and users tables with an INNER JOIN.

Then, you could create two helper columns using the CASE WHEN statement. See the full solution on Interview Query.

48. What is a foreign key? How is it used?

A foreign key is a column or group of columns that establishes a link between the data in two tables. The link is created when the column or group of columns holding the primary key values of one table is referenced by a column or group of columns in another table. The referencing column becomes a foreign key in the second table.

49. What’s the difference between an INNER JOIN and LEFT JOIN?

The INNER JOIN returns rows only when there is a match in both tables. A LEFT JOIN returns all rows from the left table, even if there is no match in the right table; in that case, the right table’s columns are NULL.
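
A quick way to see the difference is to run both joins on the same data. The sketch below uses Python's built-in sqlite3 module with two made-up tables, `users` and `orders`, where one user has no orders.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE users (id INTEGER, name TEXT);
CREATE TABLE orders (id INTEGER, user_id INTEGER);
INSERT INTO users VALUES (1, 'Ana'), (2, 'Ben');
INSERT INTO orders VALUES (100, 1);
""")

# INNER JOIN keeps only users that have a matching order.
inner_rows = conn.execute("""
    SELECT u.name, o.id
    FROM users AS u
    INNER JOIN orders AS o ON o.user_id = u.id
    ORDER BY u.id
""").fetchall()

# LEFT JOIN keeps every user; unmatched order columns come back as NULL (None).
left_rows = conn.execute("""
    SELECT u.name, o.id
    FROM users AS u
    LEFT JOIN orders AS o ON o.user_id = u.id
    ORDER BY u.id
""").fetchall()

print(inner_rows)
print(left_rows)
```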

50. Write a SQL query to return all the neighborhoods with 0 users in them.

Our task is to find all the neighborhoods without users. In other words, we need all the neighborhoods that do not have a single user living in them. This means we have to check for values that exist in one table but not in the other.
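
One common way to express "exists in one table but not the other" is a LEFT JOIN followed by an IS NULL filter. The sketch below runs that pattern with Python's built-in sqlite3 module; the table layout (`neighborhoods.id`, `users.neighborhood_id`) and the sample rows are assumptions for illustration, not the exact schema from the question.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE neighborhoods (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE users (id INTEGER PRIMARY KEY, neighborhood_id INTEGER);
INSERT INTO neighborhoods VALUES (1, 'Downtown'), (2, 'Harbor'), (3, 'Uptown');
INSERT INTO users VALUES (1, 1), (2, 1), (3, 3);
""")

empty_neighborhoods = conn.execute("""
    SELECT n.name
    FROM neighborhoods AS n
    LEFT JOIN users AS u ON u.neighborhood_id = n.id
    WHERE u.id IS NULL  -- no user row matched this neighborhood
    ORDER BY n.name
""").fetchall()

print(empty_neighborhoods)
```

A NOT EXISTS subquery would be an equivalent alternative to the LEFT JOIN filter.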

51. Write a SQL query to find total distance traveled by Uber users last month.

In this question, you’re given a users and rides table.

We need to accomplish two things. One is to figure out the total distance traveled for each user_id. The second is to get the user’s name and then order by the distance traveled.

Let’s break it down into steps and tackle an easier problem first. First off, how would we get the total distance traveled for all users?

One way to do so would be with the SUM function. Since the rides table represents all of the rides, each row in the rides table represents one ride, and the distance traveled in each ride. Therefore to get the total distance traveled, we just run:

SELECT SUM(distance) FROM rides

52. Write a SQL query to get month-over-month change in revenue.

You’re given two tables: transactions and products.

Whenever a question asks for month-over-month, year-over-year, or similar change in SQL, you can approach it in two different ways. One is using the LAG window function, which is available in many SQL dialects. Another is to do a sneaky self-join.

For both, we first have to sum the transactions and group by the month and the year.
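
Here is a hedged sketch of the LAG approach, run with Python's built-in sqlite3 module (window functions need SQLite 3.25 or newer). The column names (`quantity`, `created_at`, `price`) and the sample rows are assumptions, since the question's exact schema is not shown here.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE products (id INTEGER PRIMARY KEY, price REAL);
CREATE TABLE transactions (
    id INTEGER PRIMARY KEY, product_id INTEGER, quantity INTEGER, created_at TEXT
);
INSERT INTO products VALUES (1, 10.0), (2, 20.0);
INSERT INTO transactions VALUES
    (1, 1, 10, '2024-01-05'),
    (2, 2, 5,  '2024-02-10'),
    (3, 1, 5,  '2024-02-20'),
    (4, 2, 6,  '2024-03-02');
""")

query = """
WITH monthly AS (
    -- Step 1: total revenue per calendar month (year and month together).
    SELECT strftime('%Y-%m', t.created_at) AS month,
           SUM(t.quantity * p.price) AS revenue
    FROM transactions AS t
    JOIN products AS p ON p.id = t.product_id
    GROUP BY month
)
-- Step 2: compare each month with the previous one using LAG.
SELECT month,
       revenue,
       ROUND((revenue - LAG(revenue) OVER (ORDER BY month)) * 1.0
             / LAG(revenue) OVER (ORDER BY month), 2) AS mom_change
FROM monthly
ORDER BY month
"""
mom = conn.execute(query).fetchall()
for row in mom:
    print(row)
```

The first month has no predecessor, so its change is NULL rather than zero.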

53. What are the differences between DDL, DML, DCL, and DQL?

These stand for:

- Data Definition Language (DDL) - This includes the commands that define the database schema, including CREATE, DROP or ALTER.

- Data Query Language (DQL) - These statements perform queries on data within schema objects with the SELECT command.

- Data Manipulation Language (DML) - This includes data manipulation commands like INSERT, UPDATE and DELETE.

- Data Control Language (DCL) - This includes permissions and security commands like GRANT and REVOKE.

54. Write a query to find the user with the highest average number of unique item categories per order.

Data Science Python Interview Questions

Python interview questions test your technical coding skills, with questions that explore concepts like data structures, NumPy and data science packages, probability simulation, statistics and distributions, and string parsing / data manipulation.

55. Write a function to select only the rows from a dataframe where the student’s favorite color is green or red and their grade is above 90.

This question requires us to filter a dataframe by two conditions: 1) the grade of the student and 2) their favorite color.

Let’s start with filtering by grade since it’s a bit simpler than filtering by strings. We can filter rows in pandas by assigning the dataframe to a filtered version of itself, with the condition in place.
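A minimal sketch of both filters combined is shown below. The function name grades_colors, the column names (grade, favorite_color), and the sample data are assumptions, since the dataframe itself is not shown here.

```python
import pandas as pd

def grades_colors(students_df: pd.DataFrame) -> pd.DataFrame:
    """Keep rows where grade is above 90 and favorite_color is green or red."""
    high_grade = students_df["grade"] > 90
    liked_color = students_df["favorite_color"].isin(["green", "red"])
    # Combine the two boolean masks with & (element-wise AND).
    return students_df[high_grade & liked_color]

# Hypothetical sample data, for illustration only.
students = pd.DataFrame({
    "name": ["Tim", "Ava", "Lee", "Zoe"],
    "grade": [95, 88, 91, 99],
    "favorite_color": ["red", "green", "blue", "green"],
})
filtered = grades_colors(students)
print(filtered)
```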

56. Write a function to generate N samples from a normal distribution and plot the histogram. You may omit the plot to test your code.

This is a relatively simple problem because we only have to set up our distribution and then generate n samples from it, which are then plotted. In this question, we make use of SciPy, a library made for scientific computing.

First, we will declare a standard normal distribution. A standard normal distribution, for those of you who may have forgotten, is the normal distribution with mean = 0 and standard deviation = 1. To declare a normal distribution, we use SciPy’s stats.norm(loc, scale) function, where loc is the mean and scale is the standard deviation (not the variance), and specify the parameters as mentioned above.
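One way this could look is sketched below. In scipy.stats.norm, loc is the mean and scale is the standard deviation. The function name normal_samples and its seed and plot parameters are assumptions added for illustration; plotting is off by default so the code can be tested without a display.

```python
from scipy import stats

def normal_samples(n, mean=0.0, sd=1.0, seed=None, plot=False):
    """Draw n samples from a normal distribution and optionally plot them."""
    dist = stats.norm(loc=mean, scale=sd)  # scale is the standard deviation
    samples = dist.rvs(size=n, random_state=seed)
    if plot:
        # Imported lazily so the function still works without matplotlib.
        import matplotlib.pyplot as plt
        plt.hist(samples, bins=30)
        plt.show()
    return samples

samples = normal_samples(1000, seed=0)
print(samples[:5])
```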

57. Amy and Brad take turns rolling a six-sided die. Whoever rolls a “6” first wins. Amy rolls first. What’s the probability that Amy wins?

In this question, we can write a Python function to simulate the scenario and see how frequently Amy wins. To solve this question, you need to model two players who alternate rolls, with Amy always rolling first.
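A simulation along those lines might look like the sketch below. The function name, the number of trials, and the seed are assumptions. For comparison, the exact answer from the geometric series is (1/6) / (1 - 25/36) = 6/11, or about 0.545.

```python
import random

def amy_wins_probability(trials=100_000, seed=0):
    """Estimate the probability that Amy, who rolls first, rolls the first 6."""
    rng = random.Random(seed)
    amy_wins = 0
    for _ in range(trials):
        amy_turn = True  # Amy always rolls first
        while rng.randint(1, 6) != 6:
            amy_turn = not amy_turn  # no six: pass the die to the other player
        # The loop ends on the roll that showed a 6; amy_turn says who rolled it.
        amy_wins += amy_turn
    return amy_wins / trials

estimate = amy_wins_probability()
print(estimate)
```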

58. Given a dictionary consisting of many roots and a sentence, write a function to stem all the words in the sentence with the root forming it.

In data science, there exists the concept of stemming, which is the heuristic of chopping off the end of a word to clean and bucket it into an easier feature set. That’s being tested in this Facebook question.

One tip: If a word has many roots that can form it, replace it with the root with the shortest length.
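One possible implementation is sketched below, assuming a root "forms" a word when the word starts with that root. The function name stem_sentence is an assumption; the input used is the example given for this question.

```python
def stem_sentence(roots, sentence):
    """Replace each word with the shortest root that it starts with."""
    # Sorting by length means the first match found is the shortest root.
    sorted_roots = sorted(roots, key=len)
    stemmed = []
    for word in sentence.split():
        # Fall back to the word itself when no root matches.
        match = next((root for root in sorted_roots if word.startswith(root)), word)
        stemmed.append(match)
    return " ".join(stemmed)

print(stem_sentence(["cat", "bat", "rat"], "the cattle was rattled by the battery"))
```

For a large dictionary of roots, a trie or a set lookup over word prefixes would scale better than scanning every root for every word.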

Example:

Input:

roots = ["cat", "bat", "rat"]

sentence = "the cattle was rattled by the battery"

Output:

"the cat was rat by the bat"

59. Given a percentile threshold and N samples, write a function to simulate a truncated normal distribution.

Example:

Input:

m = 2

sd = 1

n = 6

percentile_threshold = 0.75

Output:

def truncated_dist(m,sd,n, percentile_threshold): ->

[2, 1.1, 2.2, 3, 1.5, 1.3]

All values in the output sample are in the lower 75% = percentile_threshold of the distribution.

Hint: First, for this question, we need to calculate where to truncate our distribution. We want a sample where all values are below the percentile_threshold.
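Following the hint, one simple approach is rejection sampling: compute the cutoff value from the inverse CDF, then keep drawing until n values fall at or below it. The sketch below uses only the standard library; the seed parameter is an assumption added so results can be reproduced.

```python
import random
from statistics import NormalDist

def truncated_dist(m, sd, n, percentile_threshold, seed=None):
    """Sample n values from N(m, sd) that lie at or below the given percentile."""
    rng = random.Random(seed)
    # Value below which `percentile_threshold` of the distribution lies.
    limit = NormalDist(mu=m, sigma=sd).inv_cdf(percentile_threshold)
    samples = []
    while len(samples) < n:
        draw = rng.gauss(m, sd)
        if draw <= limit:  # reject draws above the truncation point
            samples.append(draw)
    return samples

print(truncated_dist(m=2, sd=1, n=6, percentile_threshold=0.75, seed=1))
```

Rejection sampling gets slow when the threshold is very low, since most draws are thrown away; sampling a uniform value in (0, percentile_threshold) and passing it through inv_cdf would avoid that.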

60. Write a function to list the pairs of friends with their corresponding timestamps of the friendship beginning and the timestamp of the friendship ending.

For this Facebook data science interview question, you’re given two lists of dictionaries representing friendship beginnings and endings: friends_added and friends_removed. Each dictionary contains the user_ids and created_at time of the friendship beginning/ending.

Input:

friends_added = [

{'user_ids': [1, 2], 'created_at': '2020-01-01'},

{'user_ids': [3, 2], 'created_at': '2020-01-02'},

{'user_ids': [2, 1], 'created_at': '2020-02-02'},

{'user_ids': [4, 1], 'created_at': '2020-02-02'}]

friends_removed = [

{'user_ids': [2, 1], 'created_at': '2020-01-03'},

{'user_ids': [2, 3], 'created_at': '2020-01-05'},

{'user_ids': [1, 2], 'created_at': '2020-02-05'}]

Output:

friendships = [{

'user_ids': [1, 2],

'start_date': '2020-01-01',

'end_date': '2020-01-03'

},

{

'user_ids': [1, 2],

'start_date': '2020-02-02',

'end_date': '2020-02-05'

},

{

'user_ids': [2, 3],

'start_date': '2020-01-02',

'end_date': '2020-01-05'

},

]
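A sketch of one way to produce that output is below. It assumes that user_ids order does not matter (so [2, 1] and [1, 2] are the same pair), that dates are ISO strings that sort chronologically, and that a friendship with no matching removal is left out, as in the example. The function name friendship_timeline is an assumption.

```python
from collections import defaultdict

def friendship_timeline(friends_added, friends_removed):
    """Pair each friendship start with its matching end, per pair of users."""
    starts, ends = defaultdict(list), defaultdict(list)
    for event in friends_added:
        starts[tuple(sorted(event["user_ids"]))].append(event["created_at"])
    for event in friends_removed:
        ends[tuple(sorted(event["user_ids"]))].append(event["created_at"])

    friendships = []
    for pair, start_dates in starts.items():
        # zip stops at the shorter list, so a start with no end is dropped.
        for start, end in zip(sorted(start_dates), sorted(ends.get(pair, []))):
            friendships.append(
                {"user_ids": list(pair), "start_date": start, "end_date": end}
            )
    return friendships
```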

61. What built-in data types are used in Python?

In Python, data types classify or categorize data, and all values have a data type. Some of the most common built-in data types in Python are:

- Number (int, float and complex)

- String (str)

- Tuple (tuple)

- Range (range)

- List (list)

- Set (set)

- Dictionary (dict)

62. How is a negative index used in Python?

Negative indexes are used in Python to access items in sequences such as lists, strings, and arrays from the end, moving backwards towards the first value. For example, index -1 refers to the last item in a list, while -2 refers to the second to last. Here’s an example of a negative index in Python:

b = "Python Coding Fun"

print(b[-1])

>> n

63. What’s the difference between lists and tuples in Python?

Lists and tuples are classes in Python that store one or more objects or values. Key differences include:

- Syntax – Lists are enclosed in square brackets and tuples are enclosed in parentheses.

- Mutable vs. Immutable – Lists are mutable, which means they can be modified after being created. Tuples are immutable, which means they cannot be modified.

- Operations – Lists have more functionalities available than tuples, including insert and pop operations, as well as sorting.

- Size – Because tuples are immutable, they typically require less memory and can be slightly faster to create and iterate over.

64. Name mutable and immutable objects.

In Python, the values of mutable objects can change, while the values of immutable objects cannot. These are the most common mutable and immutable data types in Python:

- Mutable – Lists, sets, dictionaries and byte arrays

- Immutable – Boolean, float, strings, frozen sets and tuples

65. What is the difference between print and return?

print and return are often confused, but there are key differences. For example, print in Python displays a value in the console. To do this, you use:

print()

Return in Python, on the other hand, returns a value from a function and exits the function. You use the return keyword to return a value from a function.

66. What is the difference between dataframes and matrices?

Although both are rectangular data types that store table data with rows and columns, there are key differences. The biggest difference is that matrices in Python (for example, NumPy arrays) can only contain a single class of data. Dataframes, on the other hand, can contain multiple classes of data, with a different type in each column.

67. What is a Python module? How is it different from libraries?

A library in Python is a collection of modules. A module is a set of code that is used for a specific purpose. Modules are typically used to split scripts into smaller files for easier maintenance. Modules also make code easier to use in many different programs.

-> Be sure to see our full guide to Python questions in data science interviews.

Data Science Probability Interview Questions

Probability theory underpins all of statistics and machine learning, and, in data science interviews, probability questions are useful for assessing analytical reasoning. Most commonly, you’ll be asked to calculate probability based on a given scenario.

68. What is an unbiased estimator? Give an example for a layperson.

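An unbiased estimator is a statistic used to approximate a population parameter whose expected value equals the true value of that parameter. An example would be taking a random sample of 1,000 voters in a political poll and using the share who support a candidate to estimate support in the total voting population. In practice, problems with how the sample is collected can introduce bias even when the estimator is unbiased in theory.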

Need some help? See our probability course for an in-depth explanation.

69. Explain how a probability distribution could be not normal and give an example scenario.

A probability distribution is not normal if its observations do not cluster symmetrically around the mean in the shape of a bell curve. An example of a non-normal probability distribution is a uniform distribution, in which all values are equally likely to occur within a given range.

70. You are given two fair 6-sided dice. If the sum of values is 7, then you win $21. However, you must pay $10 per roll. Is the game worth playing?

First, we consider how many ways we can possibly roll a seven. Let D_1 and D_2 be the result of the first and second dice respectively. There are six ways that the sum (D_1+D_2)=7. See the solution on Interview Query.
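The count of six can be checked by enumerating all 36 outcomes, and from there the expected value per roll follows directly. The sketch below uses exact fractions; the variable names are illustrative.

```python
from fractions import Fraction
from itertools import product

# All 36 equally likely (D_1, D_2) outcomes for two fair dice.
rolls = list(product(range(1, 7), repeat=2))
ways_to_seven = sum(1 for d1, d2 in rolls if d1 + d2 == 7)

p_win = Fraction(ways_to_seven, len(rolls))
# Win $21 with probability p_win, and pay $10 on every roll.
expected_value = 21 * p_win - 10
print(ways_to_seven, p_win, expected_value)
```

Under these rules the expected value per roll is negative, which is the basis for deciding whether the game is worth playing.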

71. You have a shuffled deck of 500 cards numbered 1 to 500. You pick 3 cards. What’s the probability of each subsequent card being larger than the last?

Imagine this as a sample space problem, ignoring all other distracting details. If someone randomly picks three uniquely numbered cards without replacement, then we can assume that there will be a lowest card, a middle card, and a high card.

Let’s make this easy and assume we drew the numbers 1, 2, and 3. In our scenario, if we drew (1,2,3) in that exact order, then that would be the winning scenario.

But what’s the full range of outcomes we could draw? See the full solution to this LinkedIn question on Interview Query.
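One way to explore that question is brute force on a smaller deck, since only the relative order of the three cards matters. The sketch below uses a 10-card deck as an assumption to keep the enumeration small.

```python
from fractions import Fraction
from itertools import permutations

deck = range(1, 11)  # smaller hypothetical deck; only relative order matters
draws = list(permutations(deck, 3))  # every ordered draw of 3 distinct cards
increasing = sum(1 for a, b, c in draws if a < b < c)

probability = Fraction(increasing, len(draws))
print(probability)
```

Each set of three cards can be drawn in 3! = 6 orders, and exactly one of those orders is increasing.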

72. Netflix has hired people to rate movies. Assuming all raters rate the same amount of movies, what is the probability that a movie is rated good?

More context: Out of all of the raters, 80% of the raters carefully rate movies and rate 60% of the movies as good and 40% as bad. The other 20% are lazy raters and rate 100% of the movies as good.

This question is asking us what percentage of movies are being rated good. How would we formally express this probability given the information provided?
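One way to express it is the law of total probability: P(good) = P(good | careful) * P(careful) + P(good | lazy) * P(lazy). A short check with exact fractions, using the percentages given above:

```python
from fractions import Fraction

p_careful, p_lazy = Fraction(80, 100), Fraction(20, 100)
p_good_given_careful = Fraction(60, 100)
p_good_given_lazy = Fraction(1)  # lazy raters rate every movie as good

p_good = p_careful * p_good_given_careful + p_lazy * p_good_given_lazy
print(p_good, float(p_good))
```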

73. There is a fair coin (heads, tails) and an unfair coin (both tails). You pick one and flip it 5 times. It comes up tails 5 times. What is the chance you are flipping the unfair coin?

The solution to this question requires knowledge of Bayes’ Rule, which says:

P(U|T)P(T)=P(T|U)P(U)

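Here, U is the event that you picked the unfair coin and T is the event that all 5 flips come up tails. P(U) = ½ and P(T|U) = 1, while P(T) = ½ * 1 + ½ * (½)^5 = 33⁄64. Therefore, we have P(U|T) * 33⁄64 = 1 * ½, so P(U|T) = ½ / (33⁄64) = 32⁄33.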

74. A fair six-sided die is rolled twice. What is the probability of getting 1 on the first roll and not getting 6 on the second roll?

This question is a simple calculation. The probability of rolling a 1 is 1⁄6. The probability of not rolling a 6 on the second roll is 5⁄6.

Therefore, the answer is 1⁄6 * 5⁄6 = 5⁄36.

75. Say you are given an unfair coin, with an unknown bias towards heads or tails. How can you generate fair odds using this coin?

Here’s an excerpt from a full solution by Robert Eisele:

“If you have a biased coin, how can we simulate a fair coin? The naïve way would be throwing the coin 100 times, and if the coin came up heads 60 times, the bias would be 0.6. We could then conduct an experiment to cope with this bias.

A better solution was introduced by John von Neumann. We are going to consider the outcomes of tossing the biased coin twice. Let p be the probability of the coin landing heads and q be the probability of the coin landing tails – where q = 1 – p.”
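The von Neumann trick can be sketched as follows: toss twice, keep the first toss if the two differ, and start over if they match. Heads-then-tails and tails-then-heads both have probability p * q, so the kept result is fair. The 70% heads bias, the seed, and the function names below are assumptions for illustration.

```python
import random

def fair_flip(biased_flip):
    """Return 'H' or 'T' with equal probability using a biased coin."""
    while True:
        first, second = biased_flip(), biased_flip()
        if first != second:  # HT and TH each occur with probability p * q
            return first     # HH and TT are discarded and we toss again

rng = random.Random(0)

def biased():
    # Hypothetical coin that lands heads 70% of the time.
    return "H" if rng.random() < 0.7 else "T"

flips = [fair_flip(biased) for _ in range(20_000)]
heads_share = flips.count("H") / len(flips)
print(heads_share)
```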

76. Given draws from a normal distribution with known parameters, how can you simulate draws from a uniform distribution?

There’s a simple answer to this question called the Probability Integral Transform or Universality of the Uniform. You would plug the draw into the CDF of the normal distribution with those parameters.
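A quick sketch of that transform using only the standard library is below. The parameters (mean 5, standard deviation 2), the seed, and the sample size are assumptions for illustration.

```python
import random
from statistics import NormalDist, mean

mu, sigma = 5.0, 2.0  # hypothetical known parameters
normal = NormalDist(mu, sigma)

rng = random.Random(0)
normal_draws = [rng.gauss(mu, sigma) for _ in range(10_000)]

# Plugging each draw into the normal CDF maps it to a Uniform(0, 1) value.
uniform_draws = [normal.cdf(x) for x in normal_draws]
print(min(uniform_draws), max(uniform_draws), mean(uniform_draws))
```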

77. Give examples of machine learning models with high bias.

High bias models make more assumptions about the data, so they tend to underfit: they can miss real patterns and perform poorly on both training data and new data. Some examples of high bias models include: linear regression, linear discriminant analysis, and logistic regression.

78. How many ways can you split 12 people into 3 teams of 4?

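With this question, the solution requires a multinomial coefficient, with n=12 people split into k=3 groups of 4: 12! / (4! * 4! * 4!).

Note: In this problem, the teams are indistinguishable from each other, so the result is also divided by 3! to avoid counting the same split more than once.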
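The count can be worked out under two readings of the problem: one where the three teams carry labels (Team A, B, C) and one where only the grouping matters. The sketch below computes both; which one an interviewer expects is an assumption you should confirm.

```python
from math import factorial

# Multinomial coefficient: ways to fill three labeled teams of 4 from 12 people.
labeled_teams = factorial(12) // (factorial(4) ** 3)

# If the teams are interchangeable, each split was counted 3! times.
unlabeled_teams = labeled_teams // factorial(3)

print(labeled_teams, unlabeled_teams)
```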

See our full list of practice probability questions.

Statistics and A/B Testing Interview Questions

Statistics and A/B questions test your ability to design tests, understand the results, and perform statistical computations. Most commonly, they’ll be framed as:

- Case study - A/B testing case study questions will provide you a testing scenario and ask you to design an A/B test, assess what’s going on with a test, or measure the results.

- Experiment design - These questions test your ability to design and measure an A/B test, and include many core statistical concepts like P-value, significance, and power in testing.

79. How would you approach designing an A/B test? What factors would you consider?

In general, you should start with understanding what you want to measure. From there, you can begin to design and implement a test. There are four key aspects to consider:

- Setting Metrics - A good metric is simple, directly related to the goal at hand, and quantifiable.

- Constructing Thresholds - By what degree must your key metric change in order for the experiment to be considered successful?

- Sample Size and Experiment Length - How large of a group are we going to test on and for how long?

- Randomization and Assignment - Who gets which version of the test and when? We need at least one control group and one variant group.

80. What are MLE and MAP? What is the difference between the two?

Maximum likelihood estimation (MLE) and maximum a posteriori (MAP) estimation are methods used to estimate a variable with respect to observed data. They are both good for estimating a single variable, as opposed to a distribution.

In machine learning, you can think of this variable as a model parameter or a weight that the model can learn. These functions are used to find the optimal parameter given the training dataset. However, they are different in that they take different approaches to estimate the variables.

81. How would you explain what happened in the experiment outlined below? How would you improve the experimental design?

Overview: You are designing an experiment to measure the impact financial rewards have on user response rates. The results show that the treatment group (received a $10 reward) has a 30% response rate, while the control group (no rewards) has a 50% response rate.

See a full video solution to this A/B testing experiment design problem on YouTube.

82. What do you think the distribution of time spent per day on Facebook looks like? What metrics would you use to describe that distribution?

Having the vocabulary to describe a distribution is an important skill as a data scientist when it comes to communicating ideas to your peers. There are four important concepts, with supporting vocabulary, that you can use to structure your answer to a question like this. These are:

- Center (mean, median, mode)

- Spread (standard deviation, inter quartile range, range)

- Shape (skewness, kurtosis, uni or bimodal)

- Outliers (Do they exist?)

See a full solution for this problem on Interview Query.

83. What metrics would you analyze and what statistical methods would you use to identify athletic anomalies indicative of a dishonest user on a sports tracking app?

More context: Suppose you work for a company whose main product is a sports app that tracks and displays running/jogging/cycling data for its users. Some metrics tracked by the app are distance, pace, splits, elevation gain, and heart rate.

You’re given the task of formulating a method to identify dishonest or cheating users – such as users who drive a car while claiming they’re on a bike ride.

Try this question on Interview Query.

84. How would you make a control group for the close friends feature on Instagram Stories and test group to account for network effects?

Usually, A/B test design starts with randomly dividing users into two groups. Then, you give each group a different version of the product, and look for differences in behavior between the groups. Random assignment is done on a per-user basis, typically. Unfortunately, this standardized method doesn’t work well in this A/B testing case question for Instagram Stories. Why is that?

85. What significance level would you target in an A/B test?

With A/B tests, there are two parameters that determine the risk of decisions based on the test’s results. They are significance level and power level. As a general rule, most target a confidence level of 95% (a significance level of 0.05), however some may consider a range between 90% and 99%. Generally, the power level is targeted at 80%.

86. How would you explain what a p-value is to someone who is not technical?

The p-value is the probability of observing results at least as extreme as your observed results, if the null hypothesis is true. When this probability is very low, it indicates that there’s significant evidence that the null hypothesis is not true, simply because observing that extreme a result would have a very low probability of occurring.

87. What does it mean for a function to be monotonic?

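A function is monotonic when it is entirely non-decreasing or entirely non-increasing over its domain; it is strictly monotonic when it is strictly increasing or strictly decreasing. The monotonicity of a differentiable function is related to its derivative. When the derivative is positive, the function is increasing, and when it is negative, the function is decreasing.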

88. What are some alternatives to A/B testing for UI decisions?

If you’re looking for an alternative to A/B testing, there are two common tests that are used to make UI design decisions. They include:

- A/B/N Tests - This type of test compares several different versions at once. (The N stands for “number,” e.g. the number of variations being tested.) This type of test is best for testing major UI design choices.

- Multivariate - This type of test compares multiple variables at once, e.g. all the possible combinations that can be used. Multivariate testing saves time, as you won’t have to run dozens of A/B tests. This type of test is best when several UI design changes are being considered.

89. How is statistical significance assessed?

P-value is used to determine statistical significance. If the p-value falls below the significance level, then the result is statistically significant.
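As a rough illustration, the sketch below computes a two-sided p-value for the difference between two conversion rates with a pooled two-proportion z-test, then compares it with a significance level of 0.05. The choice of test, the function name, and the conversion counts are all assumptions; the right test depends on the metric and the experiment design.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for a difference in conversion rates (pooled z-test)."""
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / std_err
    # Probability of a z-score at least this extreme in either direction.
    return 2 * (1 - NormalDist().cdf(abs(z)))

alpha = 0.05  # significance level
# Hypothetical results: 200/2000 conversions in control, 260/2000 in variant.
p_value = two_proportion_p_value(conv_a=200, n_a=2000, conv_b=260, n_b=2000)
is_significant = p_value < alpha
print(p_value, is_significant)
```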

90. What does selection bias mean?

Selection bias occurs when the data used is not representative of the population being studied, typically because the sample was not properly randomized.

91. Let’s say that you are working as a data scientist at Amazon Prime Video, and they are about to launch a new show, but first want to test the launch on only 10,000 customers.

- How do we go about selecting the best 10,000 customers for the pre-launch?

- What would the process look like for pre-launching the TV show on Amazon Prime to measure how it performs?

Business Case Interview Questions

In data science interviews, business case questions provide a scenario and test your problem-solving approach. You’ll be provided with a dataset and problem, and then you’ll be asked to investigate and offer solutions to the problem.

92. DoorDash just launched in NYC and Charlotte. How would you choose Dashers (delivery drivers) for these new cities?

First, think about the criteria. Would they be the same for both cities? Certainly not. That’s because the cities are very different in terms of density; Dashers in NYC might prefer bikes, while Charlotte’s Dashers might prefer cars. See a full solution to this question on Interview Query.

93. You want to remove duplicate product names from a very large ecommerce database. How would you go about doing this?

More context: The duplicates may be listed under different sellers, names, etc. For example, “iPhone X” and “Apple iPhone 10” are two names that mean the same iPhone.

See a full mock interview solution for this question on YouTube.

94. Let’s say you are a data scientist on the marketing team for Spotify. How would you determine how much Spotify should pay for an ad in a third party app?

Start with some clarifying questions. How large is the user base of this third-party app? What are the user demographics? Additionally, you’d want to learn some more about Spotify’s marketing strategy, e.g. how much you spend on similar campaigns, the goals of the ad campaign, target CPA (if that’s the goal).

Then, you can start to estimate pricing based on probable conversion rates.

95. How would you determine if the price of a Netflix subscription is truly the deciding factor for a consumer?

See a full solution for this business case question on YouTube.

96. How would you measure the success of Facebook Groups?

One thing that helps with product questions is to go back to the raw understanding of what the product feature is for.

Facebook groups provide a way for Facebook users to connect with other users through a shared interest or real-life/offline relationship. This space enables these Facebook users to easily socialize in a group-like setting; planning events, discussing topics etc. Facebook also allows groups to be private/closed off or more open and public facing.

In this case, the user’s goal is to experience a sense of community. By doing so, we derive our business goal of increasing user engagement with Facebook Groups. What general engagement metrics can we associate with this value?

97. Determine average lifetime value of a customer based on provided criteria.

Let’s say you’re asked to calculate LTV for a company that’s been in operation for less than one year.

Average lifetime value is defined as the predicted net revenue attributed to the entire future relationship with a customer, averaged across all customers. Given that we don’t know the future net revenue, we can estimate it by taking the total amount of revenue generated divided by the total number of customers acquired over the same period of time.

So given that we know that the average customer length on the platform is 3.5 months, couldn’t we just calculate the LTV as $100 * 3.5 = $350? Not exactly.

This is a pretty short time for a business to be around when the lifetime subscription of a customer could be much longer. Our average customer length is then biased based on the fact that the business hasn’t been around long enough to correctly measure a sample average that is indicative of the true mean.

98. Is a 50% discounted rider promotion a good or bad idea? How would you measure the promotion?

This is a question with many paths and no single right answer. Rather, the interviewer is looking at how you explain your reasoning when facing a practical product question.

The first assumption is that the goal of the discount is to increase retention and revenue. Let’s also assume that this discount is going to be applied uniformly across all users, regardless of their time on the platform (i.e. not targeting new users). Lastly, let’s assume that the 50% discount is applied on only one ride.

Now that we’ve stated our assumptions, let’s figure out how we can evaluate the change. Since the question prompts a feature change in the form of pricing, this means we can propose an AB test and set metrics for evaluation of the test. Our AB test would be designed with a control and a test group, with only new users getting bucketed into either variant. The test group would receive a 50% rider discount on one ride and the control group would not.

What comes next?

99. What metrics would you look at to estimate demand for a ride-sharing app?

Product questions ask you to pull metrics. In this case, what metrics could help us estimate demand for rides? Some metrics you might choose include:

- App opens

- Session duration (app open to booking)

- Bookings

- % of users who open app and can’t find a driver

- Nearby drivers

- Conversion rates

- Waiting time for pick-ups

- Rider volume per ride

100. How would you approach forecasting revenue for the coming year?

Suppose you’re on Facebook’s revenue forecasting team. How would you estimate revenue? First, start with some clarifying questions:

- Is this revenue for all of Facebook or just a certain division? Let’s say it’s for all of Facebook.

- How much previous data do we have? At this point, we can say almost 20 years.

- What is this revenue forecast being used for?

To set up our forecast model, we want to look at historical revenue data for Facebook. We should consider revenue streams from the different services Facebook offers. For example, if the three primary revenue streams are Newsfeed, Messenger, and Marketplace, we can analyze revenue from the past five years from each product individually instead of in aggregate.

Next, we want to look for certain attributes like seasonality and trends. What comes next?

Database Design and Engineering

Database design questions are asked to test your knowledge of data architecture and design. In data science interviews, database design questions will usually ask you to design a database from scratch for a provided application or business idea.

101. What are the features of a physical data model?

Here’s an overview of the features in physical models:

- Specifying all tables and columns

- Foreign keys to identify relationships between tables

- Denormalization may occur based on user requirements.

- Physical considerations may cause the physical data model to be quite different from the logical data model.

- Physical data model will be different for different RDBMS. For example, data type for a column may be different between MySQL and SQL Server.

102. Explain a challenging data modeling project you have worked on.

With a question like this, focus on outlining the project and the unique challenges of the project. For example, if you worked with a healthcare company, patient privacy could have been a potential problem. Additionally, explain all of the entities that were linked and your process for designing the data model.

103. Let’s say you’re setting up the analytics tracking for a web app. How would you create a schema to represent client click data on the web?

A simple but effective design schema for this question would be to first represent each action with a specific label. In this case, that means assigning each click event a name or label describing its specific action.

For example, let’s say the product is Dropbox and we want to track each folder click on the UI of an individual person’s Dropbox. We can label the clicking on a folder as an action name called folder_click. When the user clicks on the side panel to login or logout and we need to specify the action, we can call it login_click and logout_click.

104. How would you design a database that could record rides between riders and drivers for a ride-sharing app? What would the table schema look like?

See the full solution for this database design question on YouTube.

105. Design the database schema to support a Yelp-like app.

More context: The application will allow anyone to review restaurants. This app should allow users to sign up, create a profile, and leave reviews on restaurants if they wish. These reviews can include texts and images. A user can only write one review for a restaurant, but can update their review later on.

The two main tables that can support the application functions would be a user table & a review table. You can include an additional restaurants table to normalize the restaurant information better.

106. What are the responsibilities of an ETL tester?

An ETL tester is responsible for running extensive tests on ETL software. In particular, the tester will design, create, and execute test cases and test plans. Testers also identify problems and suggest solutions, as well as approve the design specification for the ETL system.

107. Explain how you would build a system to track changes in a database.

You can track changes in a database by creating a separate table that gets entries added via triggers when INSERT, UPDATE or DELETE statements are used. This is the most common way, and it covers general changes.

You would also want to track changes by user. There are many ways to do this, but one would be to have a separate table that inserts a record every time a user updates data. This would record the user, time, and the ID of the changed record.

108. How would you design the data model for the notification system of a Reddit-style app?

This is a classic data engineering case study question, and your goal is to walk the interviewer through a data model. Here, start with a clarification about the notifications. Typically, you’ll have two types:

- Trigger-based notifications - For example, let’s say a user gets a comment reply on one of their posts. We want to send an email notification to the user in real-time. These are event-based.

- Scheduled notifications - The app wants to push engagement and chooses to send out targeted content notifications to users. These are user triggered.

Next, walk the interviewer through how you would design a simple database for notifications. This database might include two tables:

- Notifications - This provides details about different types of notifications.

  - Name - the name of the notification

  - Type - the type of the notification; in this case, “user” or “event”

- Notification Metrics - This would provide metrics about the notifications.

  - Time sent

  - Events - like reads, clicks, deliveries, etc.

109. What is a conceptual data model? What is a physical model?

A conceptual data model is a high-level model that includes the entities stored in a database, as well as the relationships between these entities. Typically, the details about each entity aren’t included in a conceptual model.

A physical model on the other hand is a model of the data that uses table and column names, as well as data types and constraints.

110. What schema is better: Star or snowflake?

Start with a clarifying question about the data that is being stored. Star schemas are generally simpler and are useful for datamarts, due to the simpler relationships. In the star schema, one or more fact tables are used to index a series of dimension tables.

Snowflake schemas use less space to store dimension tables but are generally more complex. They also have no redundant data and are easier to maintain. Snowflake schemas are better for data warehouses.

Additional Data Science Interview Resources

Join Interview Query today and begin preparing for your interview.
